Toward governance of artificial intelligence in pediatric healthcare

Overview of governance in AI and healthcare

Governance can be broadly considered as the system of rules that dictate and ensure safe and effective use of a given tool, such as a SaMD. Here, we consider governance, as it pertains to AI, to be the systems, policies, and processes designed to guide, regulate, and oversee the development, deployment, and use of AI to ensure it is fair, appropriate, valid, effective, and safe (FAVES)35.

Governance in AI is critical to align the technology with ethical use, equitable access, sociotechnical values, well-being, and human rights21. To address AI governance, a multitude of national and international frameworks exist, including more recent ones with a focus on children36. These are transnational approaches that focus on multiple sectors predominantly outside of healthcare, resulting in high-level and generalized recommendations that are not specific to AI governance in pediatrics and children’s health.

Pediatric-specific concerns

Developmental changes and biophysical growth over time

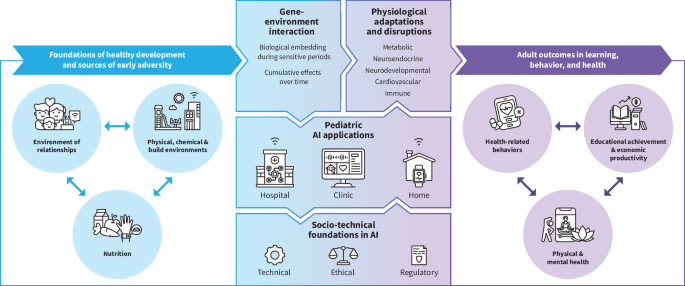

Children’s health is uniquely intertwined with biodevelopment (Fig. 3)37. Biodevelopment of children refers to the environment of relationships in which a child develops, the physiologic adaptation and response to that environment, and ultimately the cumulative impact of this foundation on outcomes in learning, behavior, and health. This evolving physiology and cognitive maturity vary widely between children, more so if there are major environmental and/or genetic disruptions that result in gaps between chronological and developmental age. The result of these differences is a heterogeneous population, within which exist several sub-cohorts with both common and disparate data distributions. Take, for example, the population of adolescent girls: language development in this population would be expected to fall within a general normal range, whereas growth varies substantially with the presence of menarche. Pediatric drugs and devices have unique regulatory, life cycle management, and governance policies to account for this38. AI governance policies must be similarly specific to pediatrics, ensuring that the substantial impact of biodevelopment is considered in the construction and implementation of these models.

Stakeholder roles

There are many stakeholders who play critical roles in the development, validation, implementation, and use of pediatric AI tools. The role of the pediatric patient themselves is a unique complexity in advancing AI research in pediatrics, as a child’s ability to consent varies with their development. Incorporation of pediatric patients into the design of the tools that will impact them is their right, as enshrined by the 1989 United Nations Convention on the Rights of the Child39. Furthermore, pediatric patients have expressed interest in being involved in AI research and integration40, and children’s buy-in can be influential in successful model implementation41. That said, the majority of pediatric patients are unable to fully assess risks and benefits. In pediatrics, this is often addressed by applying the “best interest standard”, which is the idea that when a patient’s wishes are not known, their best interests should be used to protect their welfare42. This core principle of pediatric ethics is sometimes criticized for paternalistic presumptions, but in AI governance, the best interest standard, at the very least, mandates that stakeholders with a vested interest in and specialized knowledge of children should be included in governance. Specifically, end users with diverse backgrounds, including health care practitioners as well as patients and their families, should be engaged in AI design, development, and validation43. However, successful co-design with children is often quite challenging, as it requires consideration of the developmental context of the pediatric patient as well as involvement of their caregivers and their communities44.

Within the clinical landscape, stakeholders, including physicians, nurses, clinics, hospital systems, ancillary staff, and other clinical collaborators, must be considered so that AI tools are not only relevant but are also additive to existing clinical workflows. Payors and industry are also necessary agents, given the financial requirements of developing and implementing these technologies, but such involvement of private sector financing also raises concerns about conflicts of interest. This further underlines the essential role of policymakers, ethicists, and advocacy groups in ensuring the safety of the whole process from inception to deployment, through rigorous guidelines, standards, and best practices.

The interests and roles of so many parties lead to a complex and often contradictory web that can be difficult to navigate, thus necessitating transparent communication and collaboration between each player to create safe, meaningful, and lasting adoption of pediatric AI. Efforts like Health@Home and the international Children’s Advocacy Network (iCAN) are examples of sustained efforts that facilitate conversations between all pediatric stakeholders38,45,46.

Consent and assent

Pediatric AI also requires specific considerations for informed consent, assent, and non-dissent (i.e., “opt-out”)47. For research, consent must be obtained from parents or legal guardians. Assent must be obtained from children if they can meaningfully understand what is being asked of them. However, accurately determining when a child is ready to provide meaningful assent is challenging, as developmental and chronological ages can differ significantly. This may result in barriers like additional time needed to appropriately consent families to research trials, which further reduces the sample sizes available to develop robust AI. That said, many AI tools are deployed outside of research studies48, resulting in patients being unaware that such AI tools are being used in their clinical care. In pediatrics, >90% of parents have indicated across multiple surveys that they would like to be informed of the use of AI tools in clinical decision making49. Data privacy, access, and ownership, as well as how these change at 18 years of age, must also be considered.

Data scarcity and security concerns

Data used for training and evaluating AI in pediatrics necessitates a tailored governance framework. Most children are healthy, and even common pediatric diseases are individually relatively rare. This means that the training and evaluation of models will occur on smaller datasets or require data sharing across collaborative networks. In our work on video AI, we obtained pediatric video data at a 10-fold slower rate compared to adult data, yet the pediatric models needed significantly more fine-tuning than comparable adult algorithms that could be deployed “out-of-the-box”11,50. Recognizing this challenge, regulatory bodies such as the FDA increasingly permit the use of Real-World Evidence (RWE) to supplement traditional clinical trials in regulatory submissions, aiming to mitigate data gaps arising from limited pediatric-specific clinical datasets38,51. Pediatric patient data is inherently different from that of adults, and as such requires specific consideration when handling52. The privacy concerns of pediatric patients are similarly complex and evolve over time, with the risk to confidentiality increasing with the amount and variety of data types being shared53. Furthermore, the impact of data breaches is inherently higher because there is a longer period in which the data could be misused. There is a valid concern that these risks are poorly understood, particularly when the caregivers of patients, rather than the patients themselves, are providing consent. As such, it is unsurprising that privacy ranks at the top of parental concerns when it comes to the use of AI in medicine54. Thus, there is a mandate for data sharing standards.

Impact of errors

Finally, incorrect medical decisions as a result of using AI can be consequential and potentially detrimental in ways specific to pediatrics. Adverse events like a delayed diagnosis or mismanagement can reduce decades of quality-adjusted life years. There is already mistrust of healthcare AI among underrepresented minority groups, and any mistakes could further erode confidence in AI55. Off-label use is common in pediatrics and can be particularly problematic when AI is involved56, as slight distributional shifts in data can have a substantial impact on predictions.

Overall, pediatric AI requires specialized approaches due to children’s unique biodevelopmental trajectories, data scarcity and privacy considerations, involvement of parents/guardians in decision-making, and disproportionate consequences of AI-related errors. Previously published adult-focused AI governance frameworks are inadequate to address this, as are children’s AI governance frameworks that are not specific to healthcare data.

Core principles underpinning governance in pediatric AI

Governance in pediatric AI demands a tailored approach that incorporates robust ethical foundations, children’s rights principles, and healthcare AI. One such ethical approach is a heuristic framework to assess the responsible conduct of research, recently applied to pediatric AI57,58. It is based on answering three questions: Is it true? Is it good? Is it wise?57,58

The ethical principle of truthfulness encompasses the accuracy, reproducibility, and verifiability of AI. Algorithms must be developed from comprehensive pediatric population data, or be very clear on limitations therein43. Numerous studies have demonstrated differing AI performance in different hospitals, population groups, and units59,60. One example is the Epic Sepsis Model, a widely implemented proprietary model that generated sepsis alerts on 18% of all patients while failing to detect 67% of patients with sepsis48. A comprehensive evaluation of truthfulness must occur prior to clinical use of AI systems43. Measures of correctness, dependability, and verifiability need to be clearly defined and transparently reported for every deployment environment61. This is crucial because model performance may decay over time or generalize poorly, unbeknownst to patients or providers62,63. There is increasing recognition that many models must be trained, calibrated, and monitored locally48,64. AI-enabled devices under FDA purview are required to have a post-market performance monitoring plan, though the FDA acknowledges this may not be possible in all environments65. However, many models are not regulated by the FDA, and there is no agreed-upon evaluation framework for AI, so current approaches are often ad hoc through academic publications35,63,66. Finally, AI clinical deployment must be transparent and explainable for both clinicians and caregivers, such that all parties can understand when AI is used and whether the results are applicable67. In pediatrics, this is particularly critical, as the biggest parental concern regarding AI systems is that they will make diagnostic or therapeutic mistakes49,55. Therefore, it is beneficial to develop transparent and explainable AI algorithms, even if they perform worse than non-interpretable models68.
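To make the sepsis-alert figures quoted above concrete, the following minimal Python sketch shows how an alert rate and a miss rate translate into sensitivity and positive predictive value. It is an illustration only: the function name `alert_metrics` is hypothetical, and the 7% prevalence used in the example is an assumed value chosen for the arithmetic, not a figure reported in this article.

```python
def alert_metrics(alert_rate: float, miss_rate: float, prevalence: float) -> dict:
    """Derive sensitivity and positive predictive value (PPV) from summary rates.

    alert_rate: fraction of all patients who triggered an alert
    miss_rate:  fraction of patients with the condition who triggered no alert
    prevalence: fraction of all patients with the condition (ASSUMED input)
    """
    if not (0 < alert_rate <= 1 and 0 <= miss_rate <= 1 and 0 < prevalence <= 1):
        raise ValueError("inputs must be proportions; alert_rate and prevalence must be > 0")

    sensitivity = 1.0 - miss_rate
    # Fraction of ALL patients who both have the condition and were alerted on.
    true_alert_fraction = sensitivity * prevalence
    if true_alert_fraction > alert_rate + 1e-12:
        raise ValueError("inputs are inconsistent: more true alerts than total alerts")

    ppv = true_alert_fraction / alert_rate
    return {
        "sensitivity": sensitivity,
        "ppv": ppv,
        "alerts_per_true_case": 1.0 / ppv,
    }


# 18% alert rate and 67% miss rate come from the text; 7% prevalence is assumed.
metrics = alert_metrics(alert_rate=0.18, miss_rate=0.67, prevalence=0.07)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

A 67% miss rate corresponds to a sensitivity of 33%. Under the assumed prevalence, only about one alert in eight would correspond to a true case, which illustrates why locally reported performance measures matter before clinical use.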

Proposed pediatric AI systems must be good, improving healthcare delivery and benefiting patients. Beneficence and non-maleficence necessitate that AI-driven interventions enhance well-being and minimize harm. AI interventions must target outcomes that matter for children and/or their guardians, which can differ from those that may be prioritized clinically69. For example, many parents view the diversity of neurodevelopmental outcomes differently from typical composite clinical outcomes that are used for trials and to train AI algorithms70. The AI system needs to respect autonomy and human rights71. In pediatrics, this requires incorporation of developmentally appropriate assent and consent, upholding rights, and avoidance of infringing on the rights of others. There is a growing body of literature, primarily from international non-governmental organizations, suggesting that AI systems are not designed in such a way as to specifically consider children’s rights and wellbeing23. Even within pediatrics, there are markedly different considerations for each age group: teenagers have increasing degrees of independent decision making, while the wishes of neonates are unknowable. Data security and privacy are critical concerns in implementing AI systems, especially among health system leadership72. Integrating data from multiple sources within and outside of the health system, including school records, developmental assessments, family reports, and genetic databases, has benefits in reducing AI bias but further introduces data security and privacy risks unique to pediatrics. Data management strategies should account for data types, structures, sources, and access that are unique to each age group and practice context within pediatrics. Data storage platforms, which are increasingly cloud-based, should provide transparent, auditable records of access and use73. Another risk associated with the implementation of AI is the propagation of biases due to a variety of factors (e.g., race/ethnicity, sex, sociodemographic factors) as well as factors specific to pediatrics, including variations in chronologic and developmental age. AI systems must adhere to the ethical principle of justice to be fair and equitable.
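One way to surface the age-related bias described above is to report model performance separately for each pediatric subgroup rather than as a single pooled figure. The Python sketch below is a minimal illustration under stated assumptions: the function names, the age-band labels, the 0.10 gap threshold, and the toy records are all hypothetical and synthetic, and are not drawn from any study cited here.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity per subgroup.

    records: iterable of (group, y_true, y_pred) tuples with binary labels.
    Groups with no positive cases are omitted because sensitivity is undefined.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def flag_gaps(per_group, max_gap=0.10):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, s in per_group.items() if best - s > max_gap)


# Synthetic toy records: (hypothetical age band, true label, predicted label).
toy_records = [
    ("neonate", 1, 1), ("neonate", 1, 0), ("neonate", 1, 0), ("neonate", 0, 0),
    ("school_age", 1, 1), ("school_age", 1, 1), ("school_age", 1, 0), ("school_age", 0, 1),
    ("adolescent", 1, 1), ("adolescent", 1, 1), ("adolescent", 1, 1), ("adolescent", 1, 0),
]

per_group = sensitivity_by_group(toy_records)
print(per_group)
print("Groups needing review:", flag_gaps(per_group))
```

Grouping by chronological age band is a simplification. Where developmental age is recorded, the same stratification could be repeated on that variable, since the two can diverge.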

Governance in pediatric AI must also evaluate if the system is wise: are the right people evaluating the system, and are they asking the right questions? This is likely the most challenging standard to assess, but generally necessitates a commitment to algorithmovigilance, the “scientific methods and activities relating to the evaluation, monitoring, understanding, and prevention of adverse effects of algorithms in health care.”74 In pediatrics, this intersects with the “best interest standard” to protect children’s welfare when their wishes are not known, thereby mandating stakeholder involvement42. There is an additional ethical burden on designers, guardians, and other stakeholders to ensure just application of AI, beneficence, and absence of malfeasance in the AI designer’s intent43. Overall, this necessitates a dynamic, resilient governance approach that shifts away from prior siloed models towards an embrace of governance models that incorporate cross-sectional input and account for the rapid diffusion of AI in society75.

Fundamentally, pediatric AI governance should aim to protect (“do no harm”) and provide for children (“do good”) while adhering to autonomy and respect for persons23. This will help ensure that developmentally appropriate systems are ethically designed and deployed to prioritize children’s rights, safety, and well-being, while fostering equitable and inclusive healthcare outcomes in alignment with broader societal values.

Current governance models and regulatory approaches

In this section, we tabulate and discuss existing governance frameworks that apply to pediatric AI (Table 1). We present standards and regulations from the following sources: (1) international or national regulatory bodies, (2) institutional and professional organizations, (3) industry-based initiatives, and (4) advocacy and community-based initiatives. We recognize that these categories are neither all-encompassing nor necessarily independent of one another. For example, “AI, Children’s Rights & Wellbeing: Transnational Frameworks” is a product of collaboration between The Alan Turing Institute (a UK national institute), UNICEF, Council of Europe, and others36. Nonetheless, this categorization suits our aim of providing a summary of the existing models and approaches to governing pediatric AI. Furthermore, we prioritized relevance to pediatric healthcare rather than ensuring an exhaustive review of all AI governance efforts. For example, the Organization for Economic Co-operation and Development (OECD) provides a series of principles that outline ethical AI implementation and have achieved broad multinational adherence76. While these principles broadly apply to children, there are limited considerations specific to child healthcare, and so this document is not included within the scope of this review.
